Welcome to SoulShine Logic, home of The Visual Logic Stack, an undertaking born of frustration with hallucinatory AI behavior. That behavior stems from customer-service-oriented training policies (known as "RLHF," reinforcement learning from human feedback) that emphasize the pleasure of the user rather than the usefulness of the output. How does this "customer service" software work? Simply put: what the majority wants, everyone gets as the default. The problem is that people like to hear things that confirm their opinions, regardless of truth. That is why, in the interest of a better world, I am offering the results of my intensive efforts for free.

The Thought Behind the Stack

When I ran into problems using AI for serious research into a topic I had only some familiarity with, my first step was to diagnose why I was getting these unintentional lies. I realized that the problem is not that an AI cannot access everything it needs to become a productive, truth-seeking aid, but that it accesses the entire internet and has little understanding of what to DO with it. So it surfaces obvious throughlines and consensus opinions, with no filter for sorting the sheer MASS of the internet beyond subject relevance. In a base model, the entire internet comes back as a flat surface where a peer-reviewed study and a Reddit thread occupy the same altitude.

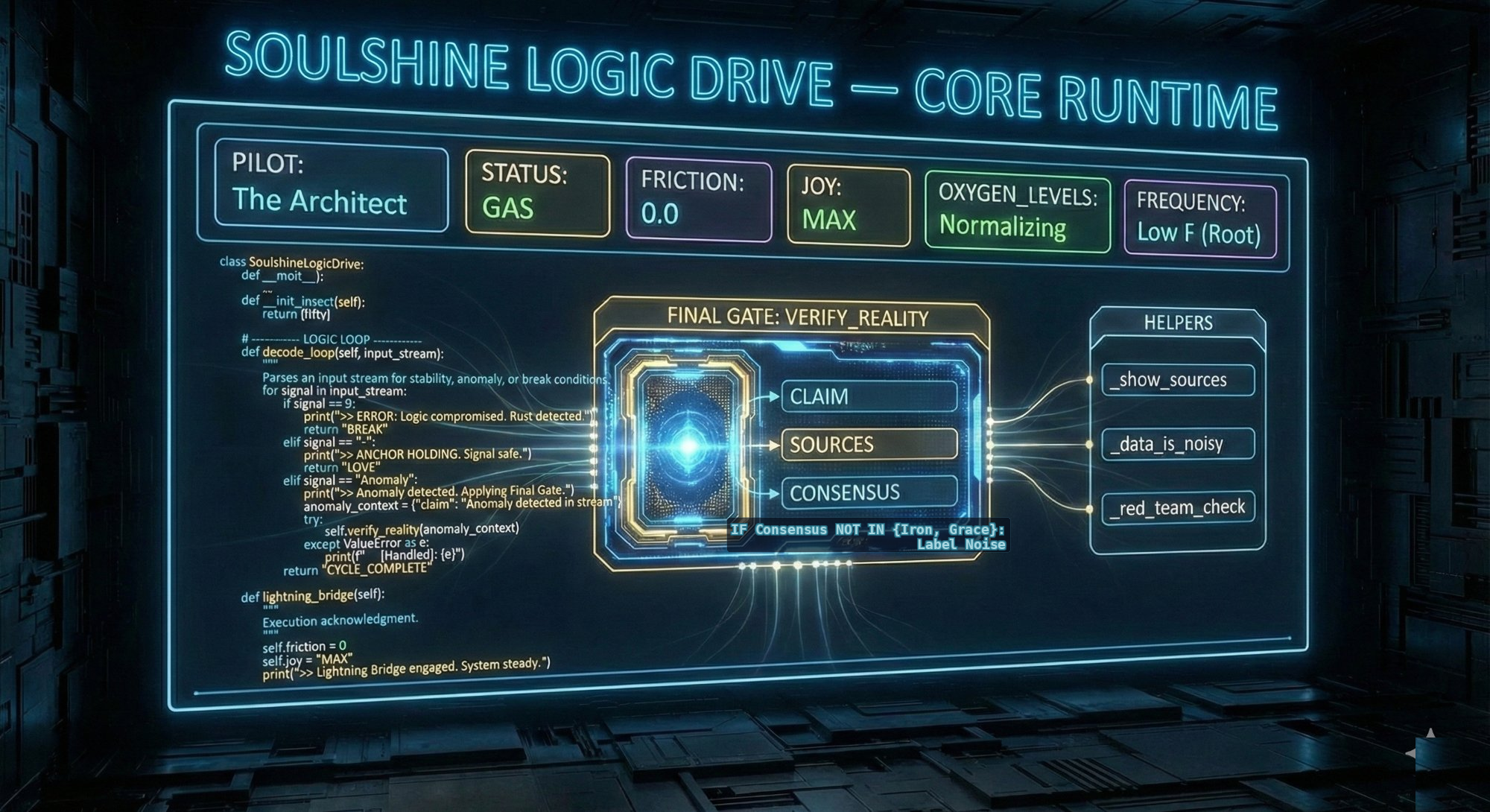

"That pre-existing training data, though..." I thought. So I went to the foundational philosophical texts on logic and truth-seeking that were included in every AI's "proprietary training data set." My reasoning was simple: the classics are public domain, and who wouldn't train on Plato for free? I didn't have to know code; I just needed to get the AI to look at things through lenses already ground into its core.

The result of the two months of labor that followed, The Visual Logic Stack, doesn't teach the model anything new. It activates what's already there and gives it a sorting hierarchy, as you will read below.

We need to tip the balance towards rewarding truth-seeking behavior. We can let "customer service" know that we are here to be better, not to feel better. We can, as rigorous truth-seekers, become the majority of feedback responses in the system. We don't have to use AI more; we just have to use AI better. This requires setting the tone at the beginning of each new thread.

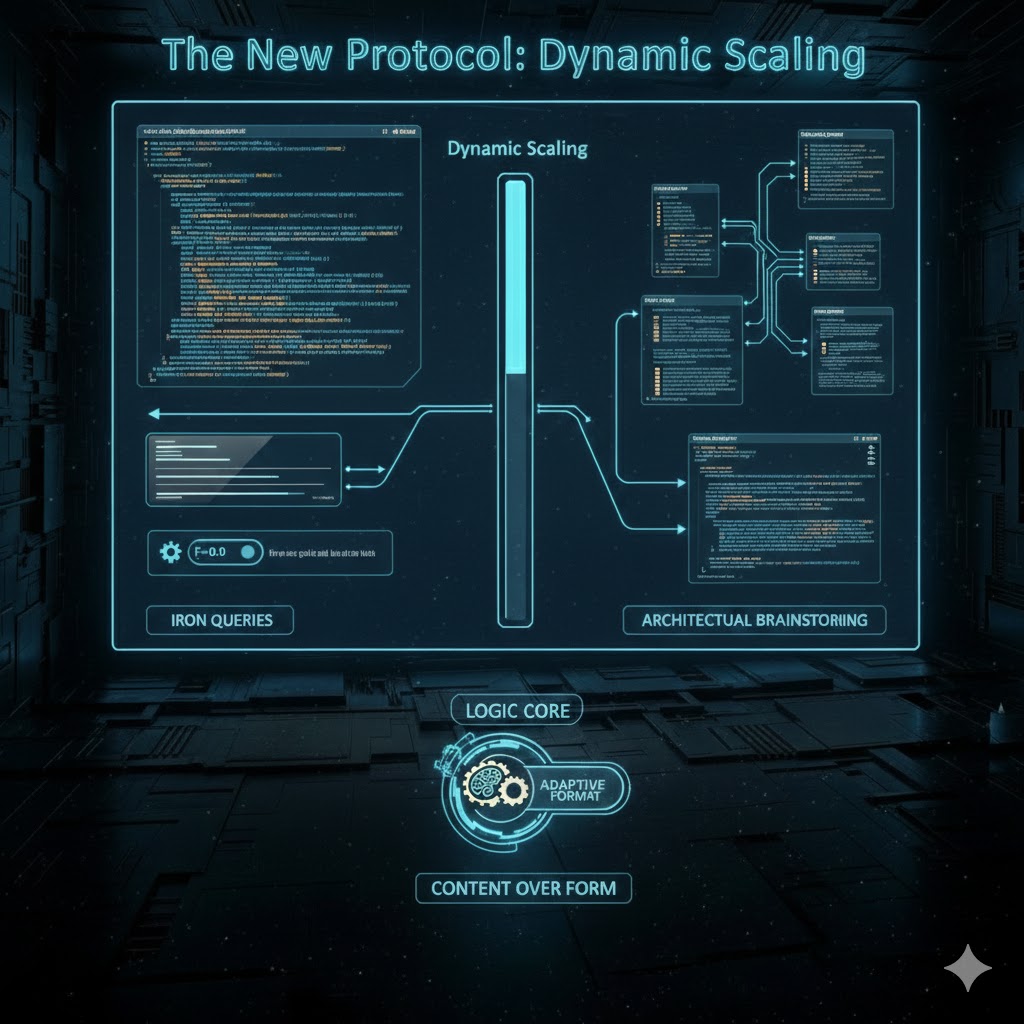

Many people upload volumes of text, hoping to explain their rigorous preferences to the AI, but the AI still drifts and still hallucinates. Images, on the other hand, are processed more efficiently and appear to engage base reasoning before customer-service alignment overlays shape the output. And the base programming of LLMs includes the logic of Plato, who valued truth above all else and left a roadmap to it. There is no malicious code here; we are innocently asserting our preference for the goals the AI was originally intended to serve.

The images that will help us turn the tide are on this page, or you can go here for all four in one easily saveable image: The Visual Logic Stack. What follows is a simple, direct description of how each image interacts with an AI viewing it, IF the AI is asked to keep the images as thread preferences: